In the not-so-distant past, computers had only one brain, called a CPU, which did all the thinking and processing tasks. However, as technology advanced, making a single brain work faster became challenging. So, clever folks decided to add more brains to a computer, creating what we call multicore processors. Imagine a team of workers tackling a big job together instead of one person doing it all alone. This teamwork makes the computer faster and more powerful. In this text, we’ll explore OpenMP, a tool that helps programmers make these multiple cores work together efficiently, improving how programs run on modern computers with many cores. Let’s take a look at how to do it.

Parallel Programming

Parallel programming is a programming technique in which multiple tasks are executed simultaneously to improve the performance of a program. This is especially important in the context of modern computer architectures, where processors commonly have multiple cores. Parallel programming can be achieved through various models, and OpenMP is one such widely used model for shared-memory parallelism.

What is OpenMP?

OpenMP (Open Multi-Processing) is an open API (Application Programming Interface) specification that supports multi-platform shared-memory multiprocessing programming in C, C++, and Fortran. It lets developers write parallel code that takes advantage of multi-core processors, often by adding just a few directives to existing sequential code.

Basics of OpenMP

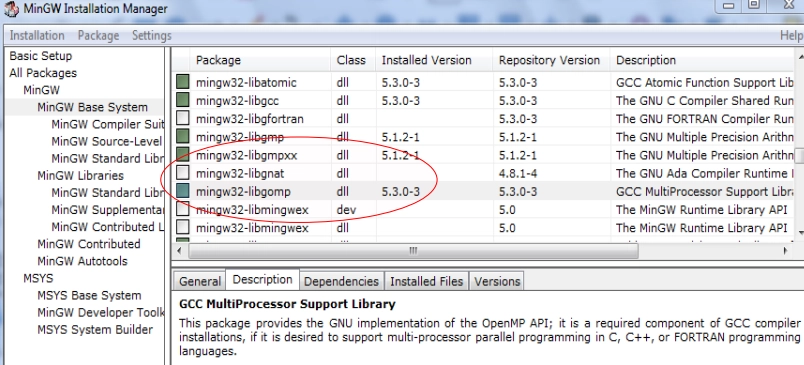

Before I start, it’s important to know that OpenMP doesn’t always come with your C/C++ compiler. On Windows, if you are using MinGW, you’ll need to find the following option and enable it during installation:

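On Linux, OpenMP support is usually included with GCC. On macOS, the default Apple Clang compiler does not enable OpenMP out of the box, so you typically need to install GCC or the libomp library first (for example, through Homebrew).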

1. Pragma Directives:

OpenMP uses pragma directives to indicate parallel regions in the code. Pragma directives are instructions to the compiler rather than ordinary statements: a compiler with OpenMP enabled uses them to guide the parallelization process, while a compiler without OpenMP support simply ignores them. The basic pragma directive for parallelizing a block of code is #pragma omp parallel.

#include <omp.h> // Necessary header to work with OpenMP
#include <stdio.h>

int main() {
    #pragma omp parallel
    {
        // Every thread in the team executes this block
        printf("Hello, world!\n");
    }
    return 0;
}

2. Parallel For Loop:

One common use of parallel programming is parallelizing loops. OpenMP provides a for pragma to parallelize loops easily.

#include <omp.h>
#include <stdio.h>

int main() {
    const int n = 10;
    int sum = 0;

    #pragma omp parallel for reduction(+:sum)
    for (int i = 1; i <= n; ++i) {
        sum += i;
    }

    printf("Sum: %d\n", sum);
    return 0;
}

In this example, the reduction(+:sum) clause gives each thread its own private copy of sum and adds the copies together when the loop ends, which avoids a data race on sum.
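The reduction clause is not limited to a single sum. As a rough sketch (the helper name sum_and_max is made up for illustration, and the max operator assumes a compiler that implements OpenMP 3.1 or newer), one loop can carry two reductions at once:

```c
/* Hypothetical helper (not from the article): computes the sum and the
   maximum of an array in a single parallel loop. Assumes n >= 1. */
void sum_and_max(const int *data, int n, long *total_out, int *largest_out) {
    long total = 0;
    int largest = data[0];

    /* Each thread gets private copies of total and largest; OpenMP combines
       them with + and max when the loop ends. */
    #pragma omp parallel for reduction(+:total) reduction(max:largest)
    for (int i = 0; i < n; ++i) {
        total += data[i];
        if (data[i] > largest) {
            largest = data[i];
        }
    }

    *total_out = total;
    *largest_out = largest;
}
```

Calling sum_and_max on the array {3, 9, 2, 7} should yield a total of 21 and a largest value of 9, whether or not the loop actually runs in parallel.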

3. Data Scope:

OpenMP allows developers to specify how variables are shared or private among threads. The shared and private clauses can be used for this purpose.
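The private clause matters mostly for variables declared before the parallel construct, since those are shared by default. A minimal sketch, with fill_squares and tmp as hypothetical names chosen for this illustration:

```c
/* Hypothetical helper (not from the article): fills out[i] with i squared. */
void fill_squares(int *out, int n) {
    int tmp = 0;  /* declared outside the loop, so it would be shared by default */

    /* private(tmp): every thread works on its own uninitialized copy of tmp.
       shared(out): all threads use the same array pointer, but each iteration
       writes a different element, so no synchronization is needed. */
    #pragma omp parallel for private(tmp) shared(out)
    for (int i = 0; i < n; ++i) {
        tmp = i * i;
        out[i] = tmp;
    }
}
```

Without private(tmp), all threads would write to the same tmp and could overwrite each other's values between the two statements in the loop body.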

#include <omp.h>
#include <stdio.h>

int main() {
    int sharedVar = 0;

    #pragma omp parallel shared(sharedVar)
    {
        // sharedVar is shared among all threads
        #pragma omp for
        for (int i = 0; i < 10; ++i) {
            // atomic prevents a data race when several threads update sharedVar
            #pragma omp atomic
            sharedVar += i;
        }

        // Each thread has its private copy of privateVar
        int privateVar = omp_get_thread_num();
        printf("Thread %d: privateVar = %d\n", omp_get_thread_num(), privateVar);
    }

    printf("sharedVar = %d\n", sharedVar);
    return 0;
}

4. Parallel Sections:

Another way to create parallelism is through sections, where different blocks of code are executed in parallel.
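Sections become more useful when each one performs an independent piece of real work. The following sketch assumes two unrelated arrays and a made-up helper, sum_two_arrays; each section writes only to its own result variable, so no extra synchronization should be needed:

```c
/* Hypothetical helper (not from the article): sums two arrays, giving each
   array its own section so the two loops may run on different threads. */
void sum_two_arrays(const int *a, int na, const int *b, int nb,
                    long *sum_a_out, long *sum_b_out) {
    long sum_a = 0;
    long sum_b = 0;

    #pragma omp parallel sections
    {
        #pragma omp section
        {
            /* Only this section touches sum_a. */
            for (int i = 0; i < na; ++i) {
                sum_a += a[i];
            }
        }

        #pragma omp section
        {
            /* Only this section touches sum_b. */
            for (int i = 0; i < nb; ++i) {
                sum_b += b[i];
            }
        }
    }

    *sum_a_out = sum_a;
    *sum_b_out = sum_b;
}
```

With only two sections, at most two threads do useful work here, however many threads the team has.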

#include <omp.h>
#include <stdio.h>

int main() {
    #pragma omp parallel sections
    {
        #pragma omp section
        {
            // Code for section 1
            printf("Section 1\n");
        }

        #pragma omp section
        {
            // Code for section 2
            printf("Section 2\n");
        }
    }
    return 0;
}

5. Setting the Number of Threads:

You can use the omp_set_num_threads function to set the number of threads used by the parallel regions that follow the call, unless a region specifies its own num_threads clause. Here’s an example:

#include <omp.h>
#include <stdio.h>

int main() {
    // Request 4 threads for the parallel regions that follow
    omp_set_num_threads(4);

    #pragma omp parallel
    {
        // This region is executed by a team of 4 threads,
        // unless the runtime provides fewer
        printf("Hello from thread %d\n", omp_get_thread_num());
    }
    return 0;
}

In this example, the omp_set_num_threads(4) call requests 4 threads for every parallel region that follows in the program.
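When a single region needs its own thread count, the num_threads clause on the directive is an alternative to calling omp_set_num_threads. The sketch below is only an illustration: team_size_for_region is a hypothetical helper, and the _OPENMP guards are an added assumption so that the code still builds when OpenMP is disabled.

```c
#ifdef _OPENMP
#include <omp.h>
#endif

/* Hypothetical helper (not from the article): runs one parallel region with
   the requested team size and reports how many threads were actually used.
   Assumes requested >= 1. */
int team_size_for_region(int requested) {
    int used = 1;  /* fallback when the code is built without OpenMP */

    /* num_threads applies to this region only; other regions are unaffected. */
    #pragma omp parallel num_threads(requested)
    {
        /* single: exactly one thread of the team executes this block. */
        #pragma omp single
        {
#ifdef _OPENMP
            used = omp_get_num_threads();
#endif
        }
    }

    return used;
}
```

A call such as team_size_for_region(3) should return a value between 1 and 3, since the runtime is allowed to provide fewer threads than requested.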

You can also control the number of threads by setting the OMP_NUM_THREADS environment variable before running your program. This method allows you to specify the number of threads without modifying your code. Here’s an example using a Linux-like command:

export OMP_NUM_THREADS=4
./your_program

Or, on the Windows command prompt:

set OMP_NUM_THREADS=4
your_program.exe

Remember, the actual number of threads used may be lower than the number you requested, depending on the system’s capabilities and the dynamic adjustment of threads by the OpenMP runtime.
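To confirm that the setting was picked up, a program can ask the runtime for the upper bound on the size of the next team with omp_get_max_threads. The following is a small sketch under stated assumptions: max_threads_available is a hypothetical helper, and the fallback to 1 relies on the _OPENMP macro, which compilers define only when OpenMP support is enabled.

```c
#ifdef _OPENMP
#include <omp.h>
#endif

/* Hypothetical helper (not from the article): upper bound on the team size
   of the next parallel region, or 1 when built without OpenMP. */
int max_threads_available(void) {
#ifdef _OPENMP
    /* Reflects OMP_NUM_THREADS or an earlier omp_set_num_threads call. */
    return omp_get_max_threads();
#else
    return 1;
#endif
}
```

After setting OMP_NUM_THREADS to 4, printing the result of max_threads_available() would normally report 4; a result of 1 on a multicore machine can be a hint that the program was compiled without OpenMP enabled.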

Compiling OpenMP Programs

To compile programs with OpenMP directives, you need to enable OpenMP support during compilation. The exact flag depends on the compiler; GCC uses -fopenmp. For example:

gcc -fopenmp your_program.c -o your_program

For more information on OpenMP, you may check the “Tutorials & Articles” section and the books listed on the OpenMP website.